Introduction

Hiring bias has historically influenced who receives professional opportunities and who is overlooked. As artificial intelligence becomes deeply embedded in recruitment processes, a critical question emerges: can technology truly create fairness, or does it simply reproduce human flaws in digital form? This article explores whether AI bias in hiring can realistically be reduced through automation or whether deeper structural issues continue to shape outcomes. Understanding this debate is essential as organizations increasingly rely on AI-driven hiring systems to make critical decisions.

What Is Bias in Hiring?

Bias in hiring refers to unfair decision-making during recruitment that is influenced by subjective judgments rather than objective qualifications. These biases often stem from unconscious assumptions, personal preferences, or stereotypes that affect how candidates are evaluated. Even structured hiring systems can unintentionally favor certain groups.

Common examples include cultural assumptions, where communication styles or accents are incorrectly equated with competence; academic bias that favors elite institutions; gender bias affecting leadership evaluations; and racial or ethnic bias linked to names or backgrounds. Such practices limit opportunity and reduce fairness.

Bias harms not only individuals but also organizations. Research suggests that diverse teams can outperform homogeneous ones in creativity, innovation, and problem solving. When bias excludes capable candidates, companies lose competitive advantage, particularly in fields driven by innovation.

In this sense, AI bias in hiring is not just an ethical issue but a strategic concern for modern organizations.

How AI Is Used in Hiring Today

Artificial intelligence already plays a role across multiple hiring stages. Resume screening tools analyze large volumes of applications by identifying keywords, skills, and experience aligned with job descriptions. This reduces manual workload and speeds up shortlisting.
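To make the keyword-matching idea concrete, here is a minimal sketch of how a screening step might score a resume against a list of required skills. The function name `keyword_score`, the sample resume, and the skill list are all hypothetical, and real screening products are considerably more elaborate. The sketch only handles single-word skills.

```python
import re

def keyword_score(resume_text, required_skills):
    """Return (fraction of required skills found, sorted list of matched skills).

    Hypothetical helper for illustration; not any vendor's actual method.
    """
    # Lowercase and tokenize so matching ignores case and punctuation.
    tokens = set(re.findall(r"[a-z0-9+#]+", resume_text.lower()))
    matched = sorted(s for s in required_skills if s.lower() in tokens)
    score = len(matched) / len(required_skills) if required_skills else 0.0
    return score, matched

resume = "Built data pipelines in Python and SQL; deployed services with Docker."
score, matched = keyword_score(resume, ["Python", "SQL", "Kubernetes", "Docker"])
print(score, matched)
```

Even this toy version hints at a fairness problem: a candidate who describes the same skill in different words scores lower, regardless of actual ability.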

AI-powered video interview tools assess speech patterns, facial expressions, and behavioral signals. While controversial, these systems claim to standardize candidate evaluations. Predictive analytics tools analyze historical performance data to forecast candidate success in specific roles.

Automated skill assessments also allow candidates to complete technical and situational tests that are scored instantly by algorithms. Together, these tools aim to improve efficiency, consistency, and scalability. The core concern remains whether AI bias in hiring is reduced or unintentionally reinforced by these systems.

Can AI Actually Remove Bias?

AI has the potential to improve fairness through consistency. Unlike humans, algorithms do not experience fatigue, emotional influence, or personal preferences. They apply evaluation criteria uniformly across candidates.

AI can also identify success predictors beyond traditional credentials, uncovering talent from non-conventional backgrounds. However, AI systems learn from historical data. If past hiring decisions contained bias, the algorithm may replicate or amplify it.

This makes data quality critical: biased training data produces biased outcomes. Another challenge is the black-box nature of complex models, which makes their decisions difficult to explain. This lack of transparency raises accountability concerns.

Explainable AI attempts to address this by clarifying why decisions were made. Instead of unexplained rejections, candidates can receive reasoning based on skills or experience gaps.

Whether AI reduces bias in hiring therefore depends on design, oversight, and governance. AI can reduce some subjective bias but cannot eliminate it without careful human supervision.

Types of Bias AI May Address

Resume Bias

AI can anonymize resumes and focus on skills, achievements, and experience rather than names, schools, or job titles, reducing unconscious assumptions.
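As a rough illustration of resume anonymization, the sketch below drops identifying fields from a structured candidate record before it reaches a reviewer or model. The field names (`name`, `school`, `job_title`, and so on) and the sample record are assumptions made up for this example; real applicant data will be shaped differently.

```python
# Hypothetical field names; adjust to whatever schema the applicant data uses.
IDENTIFYING_FIELDS = {"name", "school", "job_title"}

def anonymize(candidate):
    """Return a copy of the record without the identifying fields."""
    return {k: v for k, v in candidate.items() if k not in IDENTIFYING_FIELDS}

candidate = {
    "name": "Jordan Example",
    "school": "Example University",
    "job_title": "Senior Analyst",
    "skills": ["SQL", "forecasting"],
    "achievements": ["Cut reporting time by automating a weekly dashboard"],
    "years_experience": 6,
}

blind = anonymize(candidate)
print(sorted(blind))
```

Dropping fields is only a first step. Free-text sections can still contain proxies for the removed attributes, which is one reason the human oversight discussed in this article still matters.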

Interview Bias

Standardized AI-driven interviews can minimize appearance- or accent-based judgments, though safeguards are essential to avoid introducing new bias.

Performance Prediction Bias

By relying on measurable indicators, AI shifts evaluation from assumptions to data-driven evidence.

Cultural Fit Bias

Rather than enforcing sameness, AI can identify complementary skills and perspectives, promoting diversity.

These applications demonstrate how AI bias in hiring can be mitigated when systems are responsibly designed.

Benefits of Using AI in Hiring

When implemented carefully, AI offers clear advantages:

Consistency in evaluations

Scalability across large applicant pools

Objectivity through data-driven criteria

Efficiency by automating repetitive tasks

Improved diversity outcomes through broader talent discovery

These benefits are significant, but only when balanced with ethical safeguards.

Challenges and Risks of AI in Hiring

Despite its promise, AI introduces new risks.

Data Bias

Algorithms trained on biased historical data can unintentionally favor specific groups.

Lack of Transparency

Opaque decision-making reduces trust and accountability.

Ethical Concerns

Automated analysis of speech or facial expressions raises privacy questions.

Legal and Compliance Risks

Discriminatory outcomes may violate employment laws, leading to regulatory scrutiny.

Managing AI bias in hiring requires awareness of these challenges.

How to Reduce Bias in AI Hiring Systems

Organizations can take practical steps:

Use diverse, representative datasets

Conduct regular third-party bias audits

Maintain strong human oversight

Continuously monitor and update models

Establish clear governance and ethical policies

Combining technical controls with human responsibility is essential.
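One simple check a bias audit might include is comparing selection rates across groups. The sketch below computes each group's rate and its ratio to the highest rate, flagging ratios below 0.8. That threshold follows the "four-fifths" rule of thumb used in US employment guidance; it is a screening heuristic, not a legal determination. The group labels and outcome data are invented for illustration.

```python
from collections import Counter

def selection_rates(outcomes):
    """outcomes: iterable of (group, was_selected) pairs -> {group: rate}."""
    applied, selected = Counter(), Counter()
    for group, was_selected in outcomes:
        applied[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / applied[g] for g in applied}

def impact_ratios(rates):
    """Ratio of each group's selection rate to the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody was selected, so there is no rate disparity to report.
        return {g: 1.0 for g in rates}
    return {g: r / best for g, r in rates.items()}

# Invented data: 40 applicants per group, 20 selected from A and 10 from B.
outcomes = (
    [("A", True)] * 20 + [("A", False)] * 20
    + [("B", True)] * 10 + [("B", False)] * 30
)

rates = selection_rates(outcomes)
ratios = impact_ratios(rates)
flagged = [g for g, r in ratios.items() if r < 0.8]
print(rates, ratios, flagged)
```

A flagged group is a prompt for closer human review of the data and the model, not proof of discrimination on its own.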

Overview Table

| Area | Key Insight |
| --- | --- |
| AI Role | Resume screening, interviews, prediction |
| Main Benefit | Efficiency and consistency |
| Core Risk | Embedded data bias |
| Key Solution | Human oversight and audits |
| Future Direction | Explainable, ethical AI |

Tech 2 Innovation Role:

Tech2innovation contributes to this discussion on AI bias in hiring by presenting a balanced, research-driven explanation that goes beyond surface-level claims. This post connects real-world hiring practices with the ethical and technical realities of AI adoption. By breaking down benefits, risks, and mitigation strategies, it helps readers understand both the promise and the limitations of AI in recruitment. The article emphasizes accountability, transparency, and governance, which are often overlooked in similar discussions, and makes clear that AI should assist rather than replace human judgment. This approach supports informed decision-making for organizations and professionals navigating AI-driven hiring.

Future Directions: Can AI Achieve Fair Hiring?

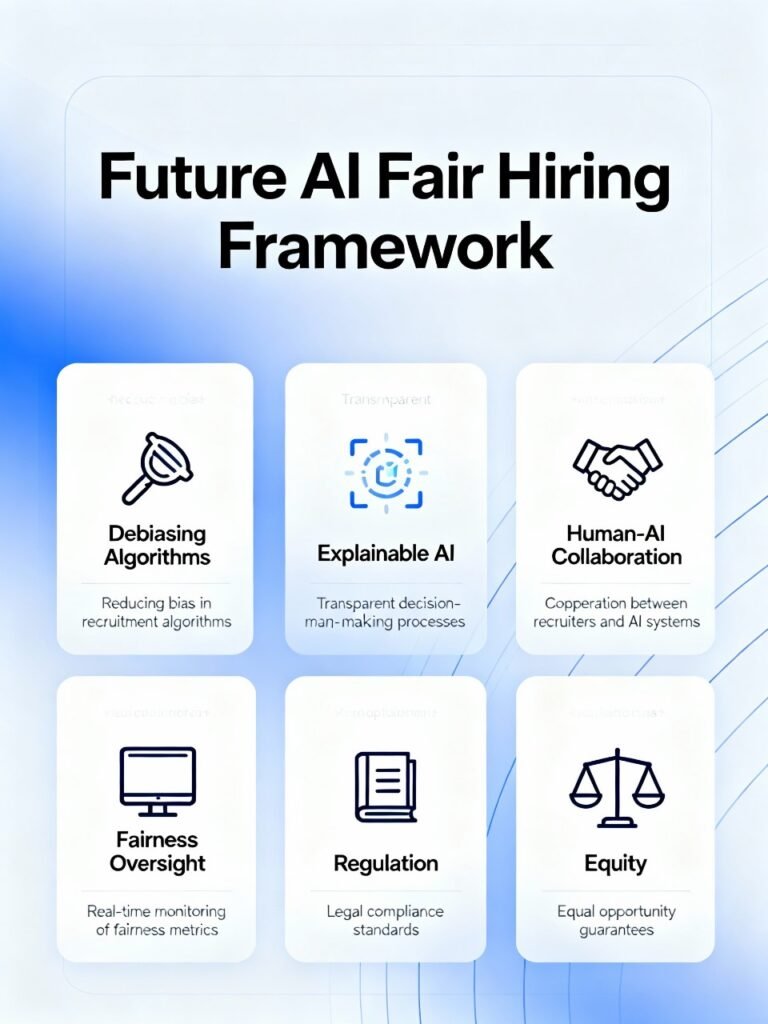

Future research focuses on fairness-aware machine learning and real-time bias detection. Explainable AI will play a key role in building trust and supporting regulatory compliance. Rather than full automation, hybrid human-AI decision-making is likely to dominate.

AI may never deliver perfect fairness, but with responsible design, regulation, and oversight it can make hiring more equitable than it is today. Addressing AI bias in hiring is therefore an ongoing process, not a one-time solution.
